*bbbbzzzz* this blog has already failed the buzzword test.

- Maybe your ticketing system doesn’t support Machine Learning **bbzzzzzz** classification

- Maybe you want to apply some Machine Learning **bbzzzzzz** to an old piece of technology on your network

- Maybe you just want to apply Machine Learning **bbzzzzzz** using a dataset you can control and tweak

A real POC use case:

An MSSP is limited to one mailbox, and receives 150+ human-written free-form emails a day covering all ticket types. An analyst who takes 1 minute to read, log, classify, prioritise, assign and move each original email is wasting two and a half hours a day (whilst suffering eye fatigue/burnout?). Or put another way, roughly 70 hours a month!!
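As a quick sanity check on those numbers, here is the arithmetic as a tiny sketch. The 28-day month is my assumption (a mailbox that gets worked every day of the week); it is what makes the 70-hour figure come out.

```python
EMAILS_PER_DAY = 150     # from the use case above
MINUTES_PER_EMAIL = 1    # read + log + classify + prioritise + assign + move
DAYS_PER_MONTH = 28      # assumption: the mailbox is worked every day


def hours_per_day(emails=EMAILS_PER_DAY, minutes_each=MINUTES_PER_EMAIL):
    """Analyst hours spent per day on manual triage."""
    return emails * minutes_each / 60.0


def hours_per_month(days=DAYS_PER_MONTH):
    """Analyst hours spent per month, given the number of days worked."""
    return hours_per_day() * days


print(hours_per_day())    # 2.5
print(hours_per_month())  # 70.0
```

With a 20-day working month instead, the same sum gives 50 hours, which is still a lot of classifying.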

So how about a playbook to do the time-wasting labour:

- Monitor an inbox near realtime

- Ingest all emails, and start a playbook I will call “ReCategorise”

- The email body passes through a learned dataset

- If >80% confidence match is made by FastText we have an answer

- If <80% we try simple keyword matching

- If still nothing matches we run a default playbook

- With this decision, we can reprocess the email into the correct playbook type

- Humans can correct any wrong prediction

- All these predictions/corrections are logged, so at the end of the month we can analyse/tweak any issues
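The decision chain in that list can be sketched in a few lines of Python. This is only an illustration of the FastText, then keywords, then default ordering; the keyword table, the `Default` category name and the `decided_by` field are all hypothetical names of mine, not part of the playbook.

```python
CONFIDENCE_THRESHOLD = 0.80  # the 80% cut-off from the list above

# Hypothetical keyword table; a real one would come from your own ticket history.
KEYWORDS = [
    ("Phishing", ("phishing", "invoice", "verify your account")),
    ("DeviceLost", ("lost", "stolen")),
    ("Enrichment", ("enrich", "hash", "ioc")),
]

DEFAULT_CATEGORY = "Default"  # hypothetical name for the catch-all playbook


def keyword_match(body, keywords=KEYWORDS):
    """Return the first category with a keyword found in the body, else None."""
    text = body.lower()
    for category, words in keywords:
        if any(word in text for word in words):
            return category
    return None


def recategorise(body, label, confidence):
    """Pick a category and record which step of the chain decided it."""
    if label and confidence > CONFIDENCE_THRESHOLD:
        return {"category": label, "decided_by": "fasttext"}
    matched = keyword_match(body)
    if matched:
        return {"category": matched, "decided_by": "keywords"}
    return {"category": DEFAULT_CATEGORY, "decided_by": "default"}
```

Recording which step made the decision is what makes the end-of-month review useful: you can see whether the model, the keywords or the default path produced the predictions that humans had to correct.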

The steps needed for this:

- Monitor an inbox to see the initial email

- Create a dataset for ML **bbbzzzzz** to read

- Write an integration that compares email to dataset

- Build this into a playbook workflow

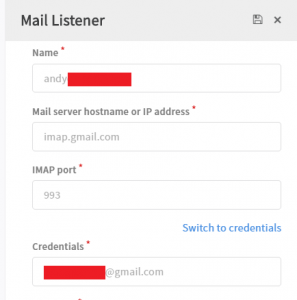

#1 Monitor an inbox

This is easy: just create a standard Mail Listener that reads new emails every x seconds.

#2 Create a learned dataset

For a real POC I would download thousands of old categorised tickets (done by humans) and label these with the confirmed ticket type. However, for this blog post I will create a simple dataset myself in “data.txt”, one example per line:

__label__<Category> <text>

__label__Phishing account locked

__label__Phishing invoice.pdf

__label__Phishing account closed

__label__Phishing your purchase

__label__Phishing your delivery

__label__Phishing netflix

__label__Phishing bank account

__label__Phishing payment transfer

__label__DeviceLost I lost my phone on a bus

__label__DeviceLost my tablet was stolen

__label__DeviceLost I can’t find my laptop

__label__DeviceLost my desktop was stolen by aliens

__label__Enrichment have you seen this url before?

__label__Enrichment can you check this file hash

__label__Enrichment here is a suspicious IP address

__label__Enrichment this ioc looks bad

__label__Enrichment please enrich this attribute
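For the real POC version, with thousands of old tickets, you would not type these lines by hand. A minimal sketch of generating them from (category, text) pairs follows; the function names are hypothetical, and I am assuming the tickets have already been exported from the ticketing system in that shape.

```python
import re

LABEL_PREFIX = "__label__"


def to_training_line(category, text):
    """Format one ticket as a single '__label__<Category> <text>' line."""
    # Collapse newlines and runs of whitespace: one example per line.
    flat = re.sub(r"\s+", " ", text).strip()
    return "{}{} {}".format(LABEL_PREFIX, category, flat)


def build_dataset(tickets):
    """Turn (category, text) pairs into training lines, skipping empty bodies."""
    return [to_training_line(category, text)
            for category, text in tickets
            if text.strip()]
```

Writing `"\n".join(build_dataset(tickets))` plus a trailing newline to data.txt then gives a file in the same format as the hand-made one above.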

Then set up the environment: Linux, Python and FastText (I chose the pyFastText implementation)

yum -y install gcc gcc-c++ build-essential redhat-rpm-config python-devel

curl "https://bootstrap.pypa.io/get-pip.py" -o "get-pip.py"

python get-pip.py

pip install --trusted-host pypi.python.org cython argparse

CFLAGS="-Wp,-U_FORTIFY_SOURCE" pip install cysignals

pip install pyfasttext

This next Python script uses FastText to compile a binary file (which we use) and a vector file (which this use case won’t use). The main line is the final call to model.supervised().

#!/usr/bin/env python

from pyfasttext import FastText

# Labelled training data, one "__label__<Category> <text>" example per line
dataSourceText = './data.txt'

# Output prefix: training writes model.bin and model.vec
dataOutModel = './model'

model = FastText(label='__label__')

model.supervised(input=dataSourceText, output=dataOutModel, epoch=100, lr=0.7)

When we execute this:

# ./myfasttext.py

Read 0M words

Number of words: 50

Number of labels: 3

Progress: 100.0% words/sec/thread: 962300 lr: 0.000000 loss: 0.735017 eta: 0h0m

# ls -lt

-rwxrwxrwx. 1 root root 45346 Oct 22 23:27 model.vec

-rwxrwxrwx. 1 root root 22165 Oct 22 23:27 model.bin
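The “Number of labels: 3” line is worth comparing against what you expect, because a typo in a label quietly creates a new category. A small sketch that counts the examples per label in the training lines before you train (the function name is mine, and it only assumes the `__label__` prefix format shown earlier):

```python
from collections import Counter


def label_counts(lines, prefix="__label__"):
    """Count training examples per label, ignoring blank or unlabelled lines."""
    counts = Counter()
    for line in lines:
        tokens = line.split()
        if tokens and tokens[0].startswith(prefix):
            counts[tokens[0][len(prefix):]] += 1
    return counts


sample = [
    "__label__Phishing netflix",
    "__label__Phishing bank account",
    "__label__DeviceLost my tablet was stolen",
    "",
]
print(label_counts(sample))
```

Running it over `open('./data.txt')` instead of the sample list also shows how lopsided the categories are, which matters once the dataset is built from real tickets.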

#3 Using Python, build a SOAR wrapper Integration to ingest that .bin file and compare emails against the trained dataset

Though I try to stay vendor neutral, this is written in Python for Demisto. Full yml file attached. The important bit of code is:

model = FastText()

model.load_model(__pathToModel.bin__)

modeloutput = model.predict_proba_single(email.body + '\n', k=2)[0]

EntryContext = {'fasttext': {'classification': modeloutput[0], 'confidence': modeloutput[1]}}
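Indexing `[0]` directly will raise if the model returns no prediction at all (an empty email body, for example). Here is a slightly more defensive sketch of the same step. The whitespace flattening and the empty-result handling are my additions, not what the attached integration does, and `StubModel` is a hypothetical stand-in so the function can be tried without a trained model.

```python
def classify_email(model, body, k=2):
    """Return the top prediction in the same shape as the EntryContext above."""
    # Flatten the body to one line (my assumption about what suits the model).
    text = " ".join(body.split())
    predictions = model.predict_proba_single(text + "\n", k=k) if text else []
    if not predictions:
        return {"fasttext": {"classification": None, "confidence": 0.0}}
    label, probability = predictions[0]
    return {"fasttext": {"classification": label,
                         "confidence": float(probability)}}


class StubModel(object):
    """Hypothetical stand-in for a loaded model, only for trying the function."""

    def predict_proba_single(self, text, k=1):
        return [("Phishing", 0.996), ("Enrichment", 0.003)][:k]


print(classify_email(StubModel(), "account locked"))
```

In the integration itself you would pass the real loaded `model` object instead of the stub.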

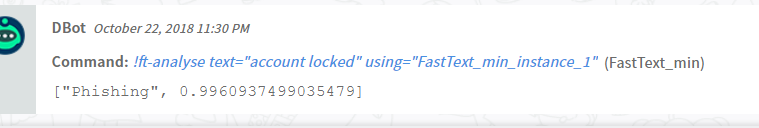

#4 Test the Integration manually

Pass the text “account locked” into FastText and check the category decided, and the confidence of this decision. Here the FastText Integration correctly guesses Phishing, with 99.6% confidence (though keep in mind, for this blog post my learning dataset is hilariously small).

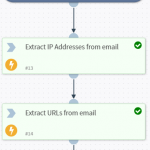

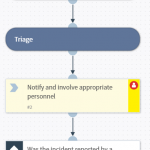

#5 Build a playbook that uses FastText as a primary comparison

“Analyse Email in FastText” (task #31) represents our above integration “ft-analyse”.

“Over 80% confidence” (task #33) asks whether FastText is confident in its prediction.

If this is over 80% confident, “What was the outcome” (task #34) looks at what this prediction was and takes the appropriate workflow path.

#6 Run test emails through the playbook

First test – I have simulated a user forwarding a real phishing email to this playbook, containing the lines:

Is this real or phishing?

Click here to verify your account<http://<removed>/membershipkey=343688408873184732/>

Failure to complete the validation process will result in a suspension of your netflix membership.

Netflix Support Team

And the playbook took this route…

Which in turn automatically re-processed the ticket as phishing.

Success!! That’s 1 minute saved on classifying the email (…fine, and another 60 minutes saved on actually processing a Phishing email… but today is about ML **bbbzzz** and not SOAR as a whole, don’t take this away from me, I’m still proud of my 1 minute!).

Second test – I have created a small simple email simulating a lost device

I lost my laptop on the bus

Stupid bus

And the playbook took this route…

Which in turn automatically re-processed the ticket as a lost device.

Then to add a cherry on top, let’s assign the ticket to an analyst based on some other criteria (I chose time of day).
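A time-of-day assignment can be as simple as a shift table. This is a sketch only: the roster, the shift boundaries and the analyst names are all made up, and in the playbook this would be a conditional task rather than a script.

```python
from datetime import datetime

# Hypothetical shift roster: (start hour inclusive, end hour exclusive, analyst).
SHIFTS = [
    (0, 8, "night-analyst"),
    (8, 16, "day-analyst"),
    (16, 24, "evening-analyst"),
]


def analyst_for(moment):
    """Return the analyst whose shift covers the given datetime."""
    for start, end, analyst in SHIFTS:
        if start <= moment.hour < end:
            return analyst
    raise ValueError("no shift covers hour {}".format(moment.hour))


print(analyst_for(datetime(2018, 10, 22, 23, 27)))  # evening-analyst
```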

The time taken to put this all together was 1-2 days (plus another 1-2 days to get my head around FastText and pyFastText), but remember that every day we save 2 hours 30 minutes of tedious work.

Whilst this playbook classifies inbound emails, we can have multiple datasets running at the same time, classifying anything you have a dataset for. I’m sure you, the reader, could think of other use cases…

Andy